Rather than allowing STP to block links until it forms a loop-free topology, fabrics include an L2 multipath scheme that forwards frames along the "best" path between any two endpoints.

Brandon Carrol outlined the basics of an Ethernet fabric here, and his description leaves me with the same question that I've had since I first heard about this technology: What problem can I solve with it?

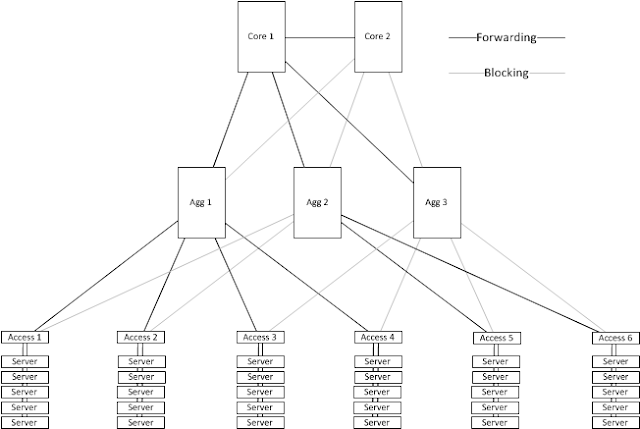

The lightbulb over my head began to glow during one of Brocade's presentations (pop quiz: which switch is the STP root in figure 1 of the linked document?) at the Gestalt IT Fabric Symposium a couple of weeks ago. In that session, Chip Copper suggested that a traditional data center topology with many blocked links and sub-optimal paths like this one:

[Figure: Three-tier architecture riddled with downsides]

might be rearranged to look like this:

[Figure: Flat topology. All links are available to forward traffic. It's all fabricy and stuff.]

The advantages of the "Fabric" topology are obvious:

- Better path selection: It's only a single hop between any two Access switches, where the previous design required as many as four hops.

- Fewer devices: We're down from 11 network devices to 6.

- Fewer links: We're down from 19 infrastructure links to 15.

- More bandwidth: Aggregate bandwidth available between access devices is up from 120 Gb/s to 300 Gb/s (assuming 10 Gb/s links).
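The link count in that list is just full-mesh arithmetic: n switches need n(n-1)/2 links, and each switch spends n-1 ports on the mesh. Here's a quick sketch of that math. The function names are made up for illustration, and the 20- and 200-switch rows are hypothetical sizes rather than anything from the presentation.

```python
def full_mesh_links(switches: int) -> int:
    """Point-to-point links needed to fully mesh `switches` devices."""
    return switches * (switches - 1) // 2


def full_mesh_ports_per_switch(switches: int) -> int:
    """Ports each switch spends on the mesh (one per peer)."""
    return switches - 1


LINK_GBPS = 10  # link speed assumed in the list above

# 6 matches the flat topology pictured; 20 and 200 are hypothetical sizes.
for n in (6, 20, 200):
    links = full_mesh_links(n)
    print(f"{n:>3} switches: {links:>5} links, "
          f"{full_mesh_ports_per_switch(n):>3} mesh ports per switch, "
          f"{links * LINK_GBPS} Gb/s of one-way link capacity")
```

Six switches come out to 15 links and 150 Gb/s of one-way capacity. Counting both directions of each full-duplex link doubles that to 300 Gb/s, which lines up with the number above, though that reading is a guess. The bigger rows show how quickly the port and cable counts grow as a full mesh gets larger.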

If I were building a network to support a specialized, self-contained compute cluster, then this sort of design is an obvious choice.

But that's not what my customers are building. The networks in my customers' data centers need to support modular scaling (full mesh designs like I've pictured here don't scale at all, let alone modularly) and they need any-VLAN-anywhere support from the physical network.

So how does a fabric help a typical enterprise?

The scale of the 3-tier diagram I presented earlier is way off, and that's why fully meshing the Top of Rack (ToR) devices looks like a viable option. A more realistic topology in a large enterprise data center might have 10-20 pairs of aggregation devices and hundreds of Top of Rack devices living in the server cabinets.

Obviously, we can't fully mesh hundreds of ToR devices, but we can mesh the aggregation layer and eliminate the core! The small compute cluster fabric topology isn't very useful or interesting to me, but eliminating the core from a typical enterprise data center is really nifty. The following picture shows a full mesh of aggregation switches with fabric-enabled access switches connected around the perimeter:

[Figure: Two-tier fabric design]

Advantages of this design:

- Access switches are never more than 3 hops from each other.

- Hop count can be lowered by running a cable.

- No choke point at the network core.

- Scaling: The most densely populated switch shown here only uses 13 links. This can grow big.

- Scaling: Monitoring shows a link running hot? Turn up a parallel link.
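The hop-count claims are easy to sanity check with a toy model. The sketch below builds a hypothetical two-tier fabric (6 meshed aggregation switches, 4 single-homed access switches each; these sizes and names are invented, not taken from the picture) and uses a breadth-first search to count the links on the shortest path between access switches.

```python
from collections import deque
from itertools import combinations

Graph = dict[str, set[str]]


def add_link(graph: Graph, a: str, b: str) -> None:
    """Record a bidirectional link between two switches."""
    graph.setdefault(a, set()).add(b)
    graph.setdefault(b, set()).add(a)


def build_two_tier(agg_count: int, access_per_agg: int) -> Graph:
    """Full mesh of aggregation switches, each with single-homed access switches."""
    graph: Graph = {}
    aggs = [f"agg{i}" for i in range(agg_count)]
    for a, b in combinations(aggs, 2):
        add_link(graph, a, b)
    for i, agg in enumerate(aggs):
        for j in range(access_per_agg):
            add_link(graph, agg, f"acc{i}-{j}")
    return graph


def hops(graph: Graph, src: str, dst: str) -> int:
    """Links on the shortest path from src to dst (breadth-first search)."""
    dist = {src: 0}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            return dist[node]
        for peer in graph[node]:
            if peer not in dist:
                dist[peer] = dist[node] + 1
                queue.append(peer)
    raise ValueError(f"{dst} is unreachable from {src}")


def worst_access_hops(graph: Graph) -> int:
    """Largest hop count between any pair of access switches."""
    access = [n for n in graph if n.startswith("acc")]
    return max(hops(graph, a, b) for a, b in combinations(access, 2))


fabric = build_two_tier(agg_count=6, access_per_agg=4)
print(worst_access_hops(fabric))                  # 3
print(max(len(peers) for peers in fabric.values()))  # 9 links on the busiest switch

# "Running a cable" between two access switches on different aggregation switches
add_link(fabric, "acc0-0", "acc5-0")
print(hops(fabric, "acc0-0", "acc5-0"))           # 1
```

In this model the worst case is access, aggregation, aggregation, access: three links. Dual-homing the access switches would add links but wouldn't change that worst case, since any two aggregation switches are already directly connected.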

Why didn't I see this before?

Honestly, I'm not sure why it took so long to pound this fabric use case through my skull. I think there are a number of factors:

- Marketing materials for fabrics tend to focus on the simple full mesh case, and go out of their way to bash the three-tier design. A two-tier fabric design doesn't sound different enough.

- Fabric folks also talk a lot about what Josh O'Brien calls "monkeymesh" - the idea that we can build links all willy-nilly and have things work. One vendor reportedly has a commercial with children cabling the network however they see fit, and everything works fine. This is not a useful philosophy. Structure is good!

- The proposed topology represents a rip-and-replace of the network core. This probably hasn't been done too many times yet :-)